In the ever-evolving world of computing, artificial intelligence (AI) is no longer a futuristic concept confined to science fiction. From voice assistants that understand our mumbling at 6 AM to self-driving cars that navigate chaotic city streets, AI has become a crucial part of our daily lives. But behind the magic of AI lies an insatiable hunger for computational power—one that traditional computer processors struggle to satisfy. Enter the Neural Processing Unit (NPU), a specialized hardware powerhouse designed to accelerate AI workloads with brain-like efficiency.

What Exactly Is an NPU?

To put it simply, an NPU is a processor tailor-made for running neural network computations quickly and efficiently. Unlike CPUs, which are the jack-of-all-trades in computing, or GPUs, which are optimized for graphics and general parallel processing, NPUs are the special forces of AI acceleration. They take loose inspiration from the human brain: many simple compute units work side by side, much as neurons do, which lets high-end NPUs perform trillions of operations per second.

But let’s pause for a second—trillions of operations? Yes, you read that right. While your average CPU might be sweating over a handful of tasks, an NPU is juggling massive AI calculations effortlessly, much like a street performer keeping dozens of flaming torches in the air without breaking a sweat.
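
To see where a figure like "trillions of operations per second" (TOPS) can come from, here is a rough back-of-the-envelope sketch. It assumes the common convention that one multiply-accumulate (MAC) counts as two operations. The function name `peak_tops` and the hardware numbers are made up for illustration and do not describe any specific chip.

```python
def peak_tops(mac_units: int, clock_hz: float) -> float:
    """Theoretical peak throughput in tera-operations per second (TOPS).

    Assumes every MAC unit finishes one multiply-accumulate per clock
    cycle, and that each MAC counts as two operations (a multiply and an add).
    """
    ops_per_second = 2 * mac_units * clock_hz
    return ops_per_second / 1e12


# Hypothetical NPU: 4096 MAC units running at 1.25 GHz.
print(peak_tops(mac_units=4096, clock_hz=1.25e9))  # prints 10.24
```

This is a theoretical peak. Real workloads usually land below it because of memory bandwidth limits and imperfect utilization.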

Why Can’t CPUs and GPUs Handle AI Alone?

For decades, CPUs have been the undisputed champions of computing, capable of managing everything from word processing to complex simulations. However, when it comes to AI, CPUs start to resemble that one friend who insists on doing everything one step at a time—methodical, precise, but painfully slow. AI, on the other hand, thrives on parallelism, requiring thousands (or millions) of simultaneous calculations.

GPUs stepped in to help, bringing their parallel processing superpowers to AI workloads. Originally designed for rendering graphics, GPUs found a second life in deep learning, where their ability to crunch large amounts of data in parallel made them indispensable. However, while GPUs excel at training AI models, they aren’t always the most efficient when it comes to inference—where trained models make real-time predictions.

This is where NPUs shine. Designed specifically for AI tasks, they execute neural network operations with high throughput and very low energy per operation, making them well suited to everything from smart assistants to self-driving cars.

Inside the Brain of an NPU

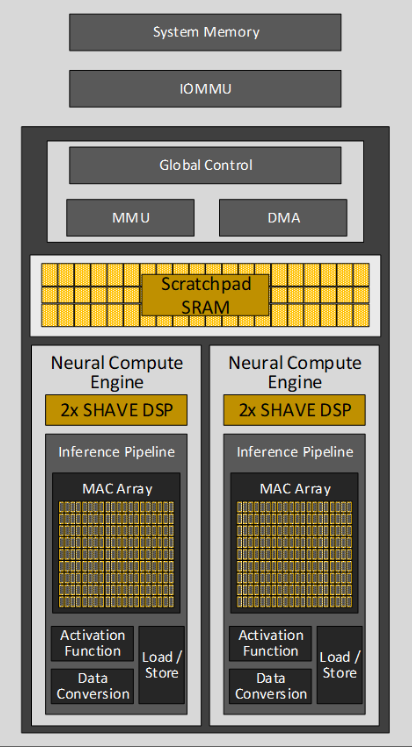

At the heart of every NPU lies an architecture built around the way artificial neural networks compute. A network’s behavior lives in its “synaptic weights”, the numeric parameters learned during training that determine how strongly each input influences a prediction. NPUs keep these weights close to their compute units and apply them to incoming data over and over. In most devices the weights stay fixed during inference; the learning and adapting happens during training, much like how your brain gets better at recognizing a song after hearing it just a few times.

Another clever trick up the NPU’s sleeve is low-precision arithmetic. General-purpose code on CPUs and GPUs often defaults to 32-bit or 64-bit floating point, but NPUs take a more practical approach, because neural networks rarely need that much precision to be effective. By using lower bit-width calculations (such as 8-bit integers instead of 32-bit floating point), NPUs can perform AI inference tasks faster and with less power consumption, a game-changer for mobile and battery-powered devices.
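
To make the low-precision idea concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It illustrates the general technique, not any particular NPU's scheme. The helper names `quantize` and `int_dot` and the sample values are assumptions chosen for this example.

```python
def quantize(values, num_bits=8):
    """Symmetric quantization: map floats to signed integers plus one scale factor."""
    qmax = 2 ** (num_bits - 1) - 1            # 127 for 8 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs else 1.0
    # Round to the nearest integer step and clamp into [-qmax, qmax].
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def int_dot(qa, qb):
    """Dot product on integers only. Hardware would typically use a wider
    accumulator (for example 32 bits) so the running sum cannot overflow."""
    return sum(a * b for a, b in zip(qa, qb))


# Made-up sample data for illustration.
weights = [0.42, -1.27, 0.05, 0.88]
inputs = [0.9, 0.5, -2.0, 0.25]

qw, w_scale = quantize(weights)
qx, x_scale = quantize(inputs)

exact = sum(w * x for w, x in zip(weights, inputs))
approx = int_dot(qw, qx) * w_scale * x_scale   # rescale the integer result once

print(f"float32-style result: {exact:.4f}")
print(f"int8-style result:    {approx:.4f}")
```

The two printed results should agree to within a small error. That small loss of accuracy is the price paid for doing the bulk of the arithmetic on cheap 8-bit integers.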

Additionally, NPUs come packed with specialized hardware modules designed specifically for neural network operations, such as matrix multiplication, activation functions, and two-dimensional data transformations. These components allow NPUs to execute deep learning computations at breakneck speeds while keeping power usage in check.
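
The operations named above can be sketched in a few lines of plain Python. This toy fully connected layer performs a matrix-vector multiplication followed by a ReLU activation, and counts the multiply-accumulate (MAC) steps that an NPU's dedicated hardware would run in parallel. The function names and the tiny integer values are illustrative assumptions, not code from any NPU toolkit.

```python
def relu(x):
    """ReLU activation: pass positive values through, clamp negatives to zero."""
    return x if x > 0 else 0


def dense_layer(inputs, weights, biases):
    """Compute relu(weights @ inputs + biases) one MAC at a time.

    Returns the layer outputs and the number of MAC steps performed.
    """
    outputs = []
    macs = 0
    for row, bias in zip(weights, biases):
        acc = bias
        for w, x in zip(row, inputs):
            acc += w * x          # one multiply-accumulate
            macs += 1
        outputs.append(relu(acc))
    return outputs, macs


inputs = [1, 2, 3]
weights = [[1, 0, -1],
           [2, 1, 0]]
biases = [0, -1]

outputs, macs = dense_layer(inputs, weights, biases)
print(outputs, macs)  # prints [0, 3] 6
```

A CPU walks through these six MACs largely one after another. The point of an NPU's matrix hardware is to perform many of them in the same clock cycle.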

NPUs vs. CPUs vs. GPUs: The Battle of AI Processing

If AI were a battlefield, CPUs would be the seasoned commanders, overseeing every aspect of computing with precision and control. GPUs would be the warriors, executing large-scale parallel operations with brute force. But NPUs? They’re the special ops units—trained, efficient, and optimized for one mission: accelerating AI.

CPUs struggle with AI because of their limited parallelism, and GPUs offer a more capable but power-hungry alternative. For inference, NPUs often strike the best balance: high efficiency, low latency, and strong performance per watt, which makes them a natural fit for real-time applications like voice recognition, facial authentication, and autonomous navigation.

Importantly, NPUs don’t seek to replace CPUs or GPUs but rather to work alongside them in a heterogeneous computing environment. The CPU handles general tasks, the GPU tackles graphics and some AI training, while the NPU takes care of AI inference—ensuring that every component plays to its strengths.
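
As a rough illustration of that division of labor, here is a toy dispatcher that routes work to a processor by task type. Everything in it is hypothetical: the `Task` class, the `ROUTING` table, and the fallback to the GPU when no NPU is present are assumptions for this sketch. Real systems make this decision inside drivers and ML runtimes.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    kind: str  # "general", "graphics", "training", or "inference"


# Hypothetical routing table mirroring the split described above.
ROUTING = {
    "general": "CPU",
    "graphics": "GPU",
    "training": "GPU",
    "inference": "NPU",
}


def pick_processor(task: Task, npu_available: bool = True) -> str:
    """Choose a processor for a task; unknown kinds fall back to the CPU."""
    target = ROUTING.get(task.kind, "CPU")
    if target == "NPU" and not npu_available:
        return "GPU"  # assumed fallback when the device has no NPU
    return target


for task in [Task("open spreadsheet", "general"),
             Task("render game frame", "graphics"),
             Task("recognize face", "inference")]:
    print(f"{task.name} -> {pick_processor(task)}")
```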

| Feature | CPU | GPU | NPU |
|---|---|---|---|
| Primary Design Goal | General-purpose computing | Graphics processing, parallel computing | AI and machine learning acceleration |
| Core Architecture | Few powerful cores | Many simpler cores | Specialized cores optimized for neural networks |
| Parallel Processing Capability | Limited | High | Very high, tailored for neural network operations |
| Typical Applications | Operating systems, general software, control tasks | Graphics rendering, gaming, some AI/ML | AI inference, specific AI tasks (image recognition, NLP) |
| Power Efficiency for AI | Low | Medium | High |
| Latency for AI Inference | High | Medium | Low |

Where Are NPUs Making an Impact?

The rise of NPUs is transforming industries far and wide, unlocking new possibilities in AI-driven applications.

In smartphones, NPUs power features like real-time photo enhancements, on-device voice assistants, and augmented reality effects. Ever wondered how your phone’s camera can blur the background in portrait mode or recognize objects instantly? You can thank the NPU for that.

Autonomous vehicles rely heavily on NPUs for real-time decision-making. By processing vast amounts of sensor data (from cameras, lidar, and radar) with minimal delay, NPUs help self-driving cars navigate complex environments safely. When every millisecond counts, an NPU helps a vehicle “see” and react to obstacles within a fraction of a second.

The Internet of Things (IoT) is also benefiting from NPUs. Smart home devices, security cameras, and industrial sensors use NPUs to process AI tasks locally, reducing the need for constant cloud connectivity. This means faster responses, improved privacy, and lower bandwidth usage—because not everything needs to be sent to the cloud just to decide whether your living room lights should dim.

In healthcare, NPUs are revolutionizing medical imaging by speeding up AI-powered diagnostics. From detecting tumors in X-rays to analyzing MRI scans in record time, NPUs are helping doctors make faster, more accurate assessments—potentially saving lives in the process.

The Future of NPUs

Looking ahead, the role of NPUs in AI computing is only going to expand. We’re already seeing the integration of NPUs into CPUs and system-on-a-chip (SoC) designs, ensuring that AI acceleration becomes a built-in feature of everyday devices.

Meanwhile, next-generation NPU architectures are being explored, such as in-memory computing, where computations happen directly within memory to cut down energy usage, and optical processors, which use light instead of electricity to perform AI computations at unprecedented speeds.

As AI models become more complex and their applications more diverse, the need for dedicated AI hardware will only grow. NPUs are poised to become a cornerstone of future computing, enabling AI to seamlessly integrate into every aspect of our lives—from intelligent assistants and robotics to cutting-edge scientific research.

The Neural Processing Unit (NPU) represents a major shift in hardware design, specifically crafted for the AI-driven era. By drawing inspiration from the human brain and combining optimizations such as low-precision arithmetic and dedicated matrix hardware, NPUs deliver better speed and energy efficiency than CPUs and GPUs on many AI inference tasks.

From smartphones to self-driving cars, medical breakthroughs to IoT automation, NPUs are quietly powering some of the most exciting advancements in modern technology. As AI continues to evolve, the NPU will remain at the heart of intelligent computing, driving the next wave of innovation that will shape our world in ways we’ve yet to imagine.

And who knows? Maybe one day, NPUs will be so advanced that they’ll start writing their own blog posts about the “outdated human brain”—but let’s hope that day is still a long way off. 🚀